Around the world, many initiatives are followed in education that have not been carefully scrutinised, much less held accountable to empirical evidence. This first became clear to me when I began my teacher training many years ago and was taught about the importance of differentiating lessons according to students’ preferred learning styles (Visual, Auditory, Kinaesthetic – VAK). I was also instructed that ‘best practice’ meant minimising the teaching of facts, the implication being that facts are much less important than “understanding”. Teacher-led teaching, I came to believe, led to passivity in the classroom. Teachers should, so the rhetoric went, serve as no more than facilitators of student learning, encouraging and guiding students to discover for themselves. As I saw what works in the classroom in terms of how humans learn, and delved into the actual research, it became clear that while such ideas may have enough surface-level plausibility to sound convincing, they do not actually have any empirical support.

This is not to say that all of these ideas are completely flawed. However, I do believe that it benefits our students’ education to scrutinise carefully some of the most commonly held assumptions in education, by holding them up to the light of empirical analysis. Based on a thorough enquiry into the myths in education, and following my reading of several excellent books on the subject (referenced below), I present here my notes and personal observations on the most persistent myths in education today:

Myth 1 – Teacher-led fact-giving should be avoided in favour of allowing students to “discover things for themselves”.

There is a general rhetoric in education that goes something like this: “Learning should not be a matter of stuffing a person’s head full of facts, but rather a process of lighting a fire in people so they have the confidence to learn independently.” In other words, the teacher should be a facilitator for student learning rather than a “fact-giver”. Instead of the teacher transmitting knowledge to students, it is widely considered best teaching practice for students to discover things for themselves. This idea can be traced back to the work of the American psychologist Jerome Bruner in the 1960s. Bruner advocated a pedagogy in which the learner interacts with the material to be learned in an active, self-directed and investigative manner, to encourage children to think and solve problems independently. Inquiry-based learning, problem-based learning and project-based learning can all be seen as having grown out of Bruner’s work.

Three main assumptions are used to justify such approaches to teaching and learning:

1. Concepts are more fully remembered.

2. Learners are more motivated to learn if they have to discover by themselves.

3. By discovering for themselves, learners will be more easily able to find solutions in new problem situations.

But to what extent is each of these assumptions actually true? Although, as I will explain, some degree of discovery learning can be useful, it should not be used in isolation, and it should only be used in certain situations. Let’s look at why by questioning each of these assumptions in turn:

1. Are concepts more fully remembered by allowing students to “discover things for themselves”?

It all depends on learners’ previous knowledge. This is because conceptual understanding is only possible by knowing facts – and usually, lots of facts. As Daisy Christodoulou (2013, p. 20) points out, we know that long-term memory is capable of storing thousands of facts, and when these are memorised around a particular topic, they together form what is called a ‘schema’. When we meet new facts about that topic, we assimilate them into that schema – and if we already have a lot of facts in that particular schema, it is much easier for us to learn new facts about that topic. To put it another way, factual knowledge makes cognitive processes work better.

Christodoulou’s example clarifies this well:

‘Critics of fact-learning will often pull out a completely random fact and say something like: who needs to know the date of the Battle of Waterloo? What does it matter? Of course, pulling out one fact like this on its own does seem rather odd. But the aim of fact-learning is not to learn just one fact – it is to learn several hundred, which taken together form a schema that helps you to understand the world. Thus, just learning the date of the Battle of Waterloo will be of limited use. But learning the dates of 150 historical events from 3000 BC to the present day and learning a couple of key facts about why each event was important will be of immense use, because it will form the fundamental chronological schema that is the basis of all historical understanding.’

As Hirsch (2017, p. 79) states, many educators now deplore the “memorising” of mere facts. According to this line of thought: “We need less memorisation of facts, and more emphasis on critical thinking skills for the 21st century”. Hirsch exposes this as a fallacy, though, by presenting evidence that a well-stocked mind (one that knows lots of facts) is the skill of skills – essential to critical thinking and to looking things up:

‘Critical thinking does not exist as an independent skill. Cognitive scientists have shown since the 1940s that human skills are domain specific, and do not transfer readily from one domain to the next. No matter how widely skilled people may be, as soon as they confront unfamiliar content their skill degenerates. An unfamiliar topic will quickly degrade both reading and writing. The domain specificity of skills is one of the most important scientific findings of our era for teachers and parents to know about.’

2. Are learners more motivated to learn if they have to discover by themselves?

Not necessarily. It stands to reason that as long as the subject matter is presented to learners in an engaging way, learners will be just as motivated to learn in a direct-instruction-based lesson as they would be through discovery learning.

Two further, related problems affect these first two assumptions about discovery learning, particularly for younger learners and especially in the domain of natural science. First, Piaget long ago made clear that younger learners see the world differently from adults (let alone scientists), interpret and understand it differently, and are not capable of carrying out the abstract cognitive transformations necessary for fruitful knowledge construction as it occurs in the sciences (Pedro de Bruyckere et al., 2015, p. 50).

Hattie and Yates (2013, p. 78) add a further problem, closely related to the importance of prior knowledge:

‘… several studies have found that low ability students will prefer discovery learning lessons to direct-instruction-based lessons, but learn less from them. Under conditions of low guidance, the knowledge gap between low and high ability students tend to increase. The lack of direct guidance has greater damaging effects on learning in low ability students especially when procedures are unclear, feedback is reduced, and misconceptions remain as problems to be resolved rather than errors to be corrected.’

3. By discovering for themselves, will learners more easily be able to find solutions in new problem situations?

Like critical thinking, problem solving is an all-purpose skill that is frequently promoted in education. According to Hirsch (2017, p. 84), however: ‘There exists no consistent all-purpose problem-solving skill, independent of domain-specific knowledge.’ There is now plenty of research evidence to demonstrate that even when people are shown how to solve a problem in one domain, they tend to be baffled by a similar problem in another domain. Learners, especially younger ones, need active guidance from the teacher through knowledge transmission.

Einstein was once reported as having said “Imagination is more important than knowledge.” As Willingham (2009, p. 46) points out though, if Einstein did actually say this, he was wrong: ‘Knowledge is more important, because it’s a prerequisite for imagination, or at least the sort of imagination that leads to problem solving, decision making, and creativity.’ It is clear that the cognitive processes we esteem most are intertwined with knowledge.

Summary…

Evidently, the pure discovery approach to learning is simply not as effective as an approach in which students are guided by a teacher to the intended learning outcomes. Even Bruner himself, some years later, replaced the concept of “discovery learning” with “guided discovery”. Guided discovery, in which each step of learners’ independent enquiry is scaffolded, should go hand in hand with a certain amount of knowledge transmission from the teacher. Problem-based learning, for example, is simply inappropriate for acquiring new knowledge or insights. However, it could be useful for applying and honing existing skills and for making connections between different concepts.

Pedro de Bruyckere et al. (2015, p. 51) assert that, as with many other educational initiatives, the effectiveness of discovery learning is dependent on the target group, the objectives and the subjects. For novice learners, pure discovery learning should never be the method, although it may be a goal. Experts, on the other hand, possess sophisticated schemas in long-term memory, allowing them to deal differently with problems and solve them in different ways. The more novice the learner, then, the more important support and guidance are; only for those who are already experts in a domain might discovery learning be effective.

Myth 2 – Knowledge and skills are distinct.

Daisy Christodoulou makes the strong case that knowledge and skills are intertwined, to such an extent that it is not really possible to separate out skills and teach them on their own. Skill progression depends upon knowledge accumulation. For example, building knowledge by committing facts to memory (e.g., the alphabet) allows learners to improve their communication skills. Likewise, learning the skill of communicating in another language requires knowledge of vocabulary and grammar. Learning all of the 12 times tables, and learning them so securely that we do not have to think about the answer when the problem is presented, is the basis of mathematical skill (and understanding). Learning the skill of building websites requires knowledge of HTML and CSS. Learning the skill of driving requires knowledge of road signs and conditions. The list of examples could go on and on – basically, all critical thinking processes are tied to background knowledge. In all domains, considerable knowledge is found to be an essential prerequisite to expert skill.

Gibson (1998, pp. 46-47) gives the example of Shakespeare’s education, which shows just how closely knowledge and skills are connected:

‘Shakespeare’s education at Stratford-upon-Avon Grammar School gave him a thorough grounding in the use of language and classical authors. Although his schooling might seem narrow and severe today (schoolboys learned by heart over 100 figures of rhetoric), it proved an excellent resource for the young playwright. Everything Shakespeare learned in school he used in some ways in his plays. Some of his early plays seem to have a very obvious pattern and regular rhythm, almost mechanical and like clockwork. But having mastered the rules, he was able to break and transform them… On this evidence, Shakespeare’s education has been seen as an argument for the value of learning by rote, of constant practice, of strict rule-following. Or, to put it another way, ‘discovery favours the well-prepared mind’. His dramatic imagination was fuelled by what would now be seen as sterile exercises in memorisation and constant practice. What was mechanical became fluid, dramatic language that produced thrilling theatre.’

Clearly, Shakespeare’s skill as a playwright came from the way he used the knowledge he had gained. As Daisy Christodoulou (2013, p. 22) argues, ‘a fact-filled education did not stifle Shakespeare’s genius; on the contrary, this education allowed that genius to flourish’. The same can of course be said of the fact-filled education received by many great writers, scientists, policy-makers, economists and inventors (and thousands of others) who have made enormous positive contributions to the world:

‘By assuming that pupils can develop chronological awareness, write creatively or think like a scientist without learning any facts, we are guaranteeing that they will not develop any of those skills’ (Christodoulou, 2013, p. 22). The idea that teaching strategies for analysing or thinking critically will allow our learners to exercise their skills of analysis or critical thinking is therefore flawed.

Myth 3 – New technology always improves students’ learning.

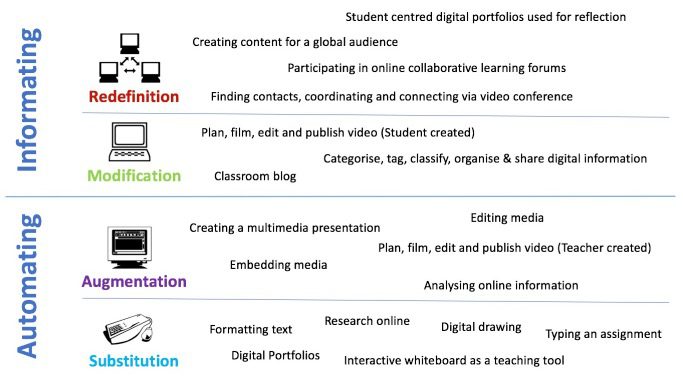

This is a myth because it depends entirely on how the technology is integrated into teaching and learning. Clark and Feldon (2014), for example, confirm that the effectiveness of learning is determined primarily by the way the medium is used and by the quality of the instruction accompanying that use. To take their idea further, it is worth exploring the work of Dr. Ruben Puentedura, who has developed a practical framework, which he calls the SAMR model, to show the impact of technology on teaching and learning. The model moves through four stages, from the substitution stage, where technology changes the learning task very little, through to the redefinition stage, where learning is transformed.

The SAMR model is powerful because it enables us to think about how learning can be extended through the use of technology. The four stages of the SAMR model are summarised here:

SUBSTITUTION – Technology acts as a direct tool substitute, with no functional change. For example, students may type up notes on a word processor instead of writing by hand in an exercise book.

AUGMENTATION – Technology still acts as a direct tool substitute, but with functional improvements. Taking the example of typing on a word processor, augmentation means that the learning process can become more efficient and engaging. Images can be added, text can be hyperlinked and changes to the text itself can be made quickly.

These first two stages of the SAMR model represent enhancements of existing ways of working. Digital technology is not necessary in order to carry out the learning task. The technology simply provides a digital medium for learning to take place, which may enhance learning.

MODIFICATION – By this stage technology not only enhances the learning activity, it also significantly transforms it. An example might be students setting up a blog in which they open up their work to a worldwide audience. The blog means that students are much more accountable for the work they present, so will tend to spend more time refining their written work. In this way, both student learning and literacy improve.

REDEFINITION – This level requires the teacher to think about learning activities that were previously inconceivable without the use of technology. This could be, for instance, a Google Hangout session between students from different countries, in which they swap information about their home countries in real time. Likewise, the use of Google Docs by students in different parts of the world to collaborate on a shared assignment creates learning opportunities that would be impossible without such technology.

The modification and redefinition levels represent transformational stages in terms of student learning, as the technology is actively helping to transform the way in which learning can occur.

The SAMR model is essentially a planning tool that helps to design better learning activities for students. The framework provides pedagogical insight into how technology can and should be used in the classroom. New technology in and of itself will not necessarily improve students’ learning outcomes. In order to get the most out of technology for learners, I would make the following recommendations in light of the SAMR model:

- Always consider whether or not the technology improves the learning process. If the learning process is enhanced through the use of technology, then it’s appropriate to use – if not, more traditional (analogue) methods can work just as well (if not better).

- Collaboration is extremely important, particularly if you are looking at learning from a social constructivist perspective. Consider how you can use technology to facilitate collaboration.

- Ensure that you use technology to expose students to the outside world. This not only helps to improve their cultural understanding and international-mindedness, it can also be great for building key literacy skills.

Myth 4 – Young people read less than they did before there was so much access to technology.

As Kevin Kelly (2016) states, ‘To everyone’s surprise, ultra thin screens and tablets have launched an epidemic of reading and writing that has continued to swell. The amount of time people spend reading has almost trebled since 1980.’ Moreover, according to Pedro de Bruyckere et al. (2015, p. 151), young people are still doing a lot of reading, and statistics make it clear that a lot of them are reading for pleasure.

Myth 5 – Boys benefit if they have male teachers.

All the evidence available points to the fact that the gender of the teacher has little or no effect on the learning performance of boys in school (Pedro de Bruyckere et al., 2015, p. 183). Contrary to popular belief, boys do not benefit in any measurable way from having a male teacher. This is important to note, since according to statistics from the Department for Education, only about 1 in 4 teachers in England are men, who make up 38% of secondary and 15% of primary school teachers. Data from the World Bank show a similar picture across Europe and North America: women markedly outnumber men in the teaching profession. A significant amount of media attention is devoted to highlighting such figures and to attracting more men into the profession. As far as boys’ learning performance is concerned, however, this focus on the shortage of men in teaching turns out to be unnecessary.

Myth 6 – Teaching is best delivered in a format that matches students’ learning preferences.

There is absolutely no evidence for this statement. The first problem is that, as evidence from Clark (1982) shows, learners who report preferring a particular instructional technique typically derive little benefit from experiencing it. The second problem lies with the concept of learning styles itself. The assumption that people can be classified into distinct learning types receives little to no support from objective studies. As Pedro de Bruyckere et al. (2015, p. 21) state: ‘Most people do not fit one particular style; the information used to assign people to styles is often inadequate; and there are so many different styles that it becomes cumbersome to link particular learners to particular styles.’ Rohrer and Pashler (2012, p. 117) summarise it as follows: ‘The contrast between the enormous popularity of the learning style approach and the lack of any credible scientific proof is both remarkable and disturbing’.

Myth 7 – Cognitive performance can be enhanced through “brain training”.

In recent years, “brain training” has become big business around the world. As well as brain training being incorporated into the curriculum of many schools, several software companies have been quick to develop “brain games” with the promise of helping to improve everything from problem-solving skills, hand-eye coordination and memory to a whole range of other cognitive abilities. However, regular practice with these so-called “brain games” has not been shown to significantly improve cognitive functioning. Adrian Owen of the Cognition and Brain Sciences Unit at Cambridge University and his colleagues published their findings in the journal Nature. Their report concludes: ‘… regular brain training confers no greater benefit than simply answering general knowledge questions using the Internet’.

As Pedro de Bruyckere et al. (2015, p. 110) state:

‘The word “brain” is misleading since any training necessarily involves the brain. There is as yet no evidence at all that brain training that is aimed at improving general cognitive abilities such as fluid intelligence will in any way be effective.

In October 2014, 73 psychologists, cognitive scientists and neuroscientists from around the world signed an open letter stating that companies marketing “brain games” that are meant to slow or reverse age-related memory decline and enhance other cognitive functions are exploiting customers by making “exaggerated and misleading claims” that are not based on sound scientific evidence.’

The implication for education is simple – there is as yet no evidence that any general training method can improve cognitive performance.

Concluding thoughts…

As we have seen, various educational initiatives and theories have gained momentum under the fundamentally false premise that they will improve students’ learning outcomes. At best, these myths embedded within education create an unnecessary distraction for students (e.g. “brain training”) and at worst, they can lead to significant amounts of teaching time being lost on practices that are simply ineffective (e.g. “discovery learning”).

In particular, there has been a strong focus in recent years on developing very broad skills: “analytical”, “critical thinking” and “problem-solving skills” – while minimising the importance placed on ‘knowledge’ and ‘facts’. This approach, however, puts the cart before the horse; skills can only be developed in line with knowledge accumulation.

It makes no sense to explicitly attempt to teach “21st century skills”. Cognitive science has shown that students develop their skills naturally, hand in hand with knowledge and hard work. Learning should be hard if it is to be effective. As Vygotsky stated in the last century, real learning takes place in a learner’s zone of proximal development: that narrow region where learning is challenging but not too hard.

It is worth emphasising that for the most part, teachers already do an excellent job of creating challenging lessons. In addition, teachers do many other things that we know are not myths: provide timely feedback, get students excited about the subject matter, go over worked examples, review previous learning, provide scaffolds for difficult tasks – and much more. All of these things that teachers do on a regular basis are supported by plenty of scientific evidence showing that they are effective for improving students’ learning. It is simply important to apply common sense in education, to scrutinise educational practices and hold them up to the light of empirical evidence.

References:

Bruyckere, P., Kirschner, P., & Hulshof, C. (2015). Urban Myths About Learning & Education.

Christodoulou, D. (2013). Seven Myths About Education.

Clark, R. E. (1982). Antagonism between achievement and enjoyment in ATI studies. Educational Psychologist, 17(2), 92–101.

Clark, R. E., & Feldon, D. F. (2014). Ten common but questionable principles of multimedia learning.

Gibson, R. (1998). Teaching Shakespeare.

Hattie, J., & Yates, G. C. (2013). Visible learning and the science of how we learn.

Hirsch, E. D., Jr. (2017). Why Knowledge Matters – Rescuing Our Children From Failed Educational Theories.

Kelly, K. (2016). The Inevitable – Understanding the 12 technological forces that will shape our future.

Puentedura, R. (2014). https://sites.google.com/a/msad60.org/technology-is-learning/samr-model

Rohrer, D., & Pashler, H. (2012). Learning styles: Where’s the evidence? Medical Education, 46, 630–635.

Willingham, D. (2009). Why Don’t Students Like School?